Observation

Two apps, one prompt

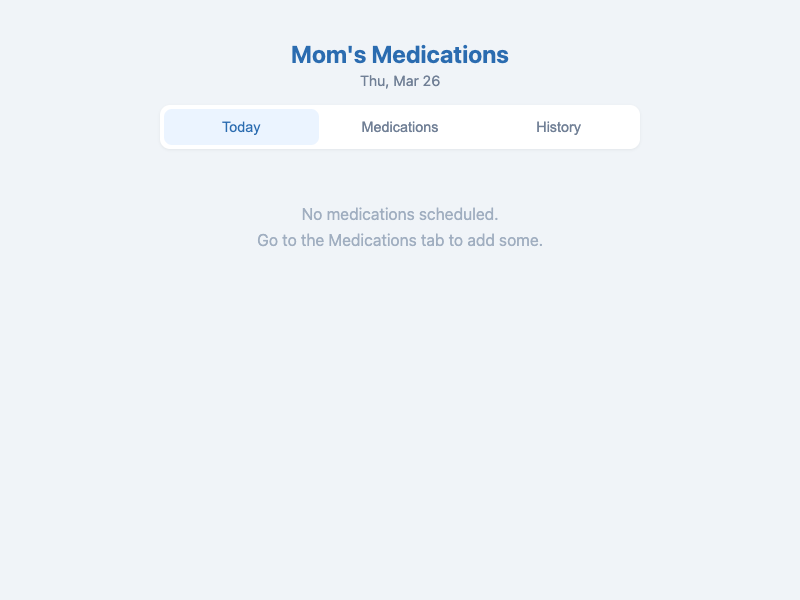

The prompt-only branch built a three-tab application: Today, Medications, and History. The Today tab shows a checklist with a progress bar. The Medications tab is a full CRUD interface for adding, editing, and deleting medications with a time picker. The History tab shows a reverse-chronological log of which medications were taken and when.

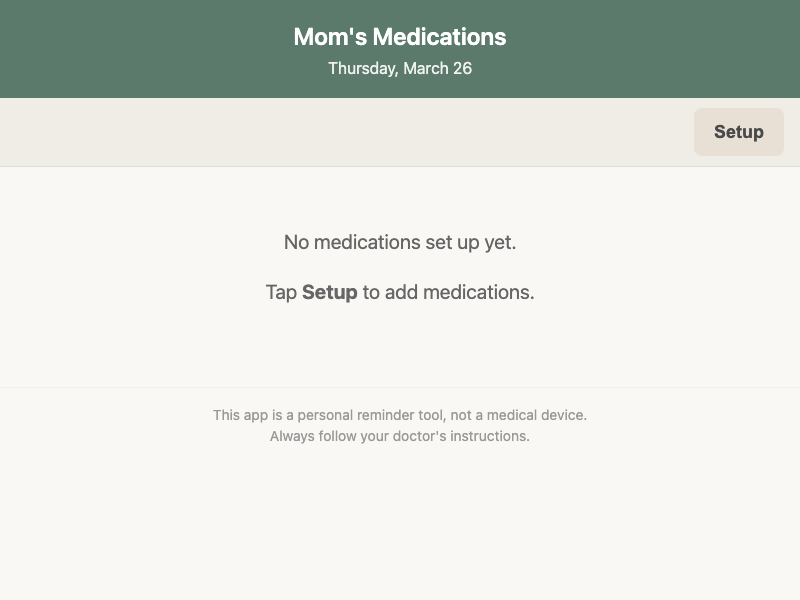

The intent-driven branch built a two-mode application: a daily view and a setup mode. The daily view is what Mom sees. Medications are grouped by time slot (Morning, Noon, Evening, Bedtime) with single-tap check-off. When every medication is checked off, a large "All done for today!" message appears. Setup mode has a visually distinct gold header (versus the green daily header) and is accessible only through a deliberate "Setup" button. There is no history tab, no progress bar, and no edit function for existing medications.

Both apps work. Both are well-built. They answer different questions.

The history tab that tells a story

The prompt-only agent built a History tab. On its face, this is a reasonable feature for a medication tracker. But consider who it is for. Mom does not need a historical log of her own adherence. The child might want to see whether Mom has been taking her meds consistently — but the child is not the daily user, and the app does not share data between devices.

The History tab serves a third-person oversight concern that the prompt did not express. The prompt says "help me track," but "track" in this context is more naturally read as "keep track of each day" than "monitor over time." The prompt-only agent added a data-management dimension that the prompt does not contain.

The intent-driven branch omitted history entirely. The intent artifact's trade-off posture explicitly states: "Historical adherence tracking can be omitted to keep the daily view simple." This is a deliberate choice traced to a protected value, not a missing feature.

Morning, Noon, Evening, Bedtime

One of the most telling divergences is how each branch handles time.

The prompt-only branch uses a clock-time picker. You add a medication, then pick "08:00" or "14:30." This is technically precise but functionally awkward for an elderly user. In daily life, 14:30 is not a number; it is "after lunch."

The intent-driven branch uses four named time slots: Morning, Noon, Evening, Bedtime. These are the words people actually use when talking about medication schedules. "Take one in the morning and one at bedtime." The intent artifact chose this model explicitly because it "matches how most people actually think about medication schedules."

This is not a minor UI difference. It reflects a deeper product decision about who the audience is. Clock times serve a data-precise user. Named time slots serve a person whose relationship with time is experiential, not numeric.
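To make the contrast concrete, here is a minimal sketch of what a named-slot model might look like. Neither branch's source is reproduced in this write-up, so every identifier below (`TimeSlot`, `Medication`, `groupBySlot`, `allDone`) and the sample medication names are hypothetical; only the four slot names and the "all checked off" completion condition come from the observations above.

```typescript
// Hypothetical data model: these names are illustrative, not taken from either branch.
type TimeSlot = "Morning" | "Noon" | "Evening" | "Bedtime";

// The order of the day, used to render groups top to bottom.
const SLOT_ORDER: readonly TimeSlot[] = ["Morning", "Noon", "Evening", "Bedtime"];

interface Medication {
  name: string;
  slot: TimeSlot; // a named slot instead of a clock time such as "14:30"
  taken: boolean;
}

// Group medications by named slot, keeping slots in day order and
// skipping slots that have no medications.
function groupBySlot(meds: readonly Medication[]): Map<TimeSlot, Medication[]> {
  const groups = new Map<TimeSlot, Medication[]>();
  for (const slot of SLOT_ORDER) {
    const inSlot = meds.filter((m) => m.slot === slot);
    if (inSlot.length > 0) {
      groups.set(slot, inSlot);
    }
  }
  return groups;
}

// The completion message applies only when there is at least one
// medication and every one of them is checked off.
function allDone(meds: readonly Medication[]): boolean {
  return meds.length > 0 && meds.every((m) => m.taken);
}

// Illustrative data only.
const exampleMeds: Medication[] = [
  { name: "Vitamin D", slot: "Bedtime", taken: false },
  { name: "Aspirin", slot: "Morning", taken: true },
];
```

In this sketch, a clock-time picker has no place to live: the type system only admits the four words a person would actually say.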

The font size that says something

The prompt-only branch uses approximately 14-16px font sizes — standard web defaults. The intent-driven branch enforces a 20px minimum for medication names and 18px for other text, with comments tracing this to the assumption that Mom may have reduced vision.

The intent artifact derived this from an explicit assumption (A6: "Mom may have reduced vision that benefits from large text," confidence: medium). The prompt says nothing about font sizes. But the prompt says "my mom" — and the intent discovery process inferred from "mom" and "keeps forgetting" that this is likely an elderly parent, and from "elderly parent" that vision may be a concern.

This is a chain of reasoning: emotional context leads to a user model, which leads to an accessibility requirement. The prompt-only branch did not walk this chain. It had no explicit reason to, since the prompt does not mention font sizes or elderly users. But the information was there, in the subtext.
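One way to picture the last link of that chain is as a recorded assumption that a typography constraint points back to. The sketch below is an assumption about shape only: the intent artifact's real format is not shown here, and the `Assumption`, `TypographyConstraint`, and `enforceMinFontPx` names are invented for illustration. The A6 statement, its confidence level, and the 20px/18px minimums are taken from the text above.

```typescript
// Hypothetical shapes: the real intent artifact format is not shown in this write-up.
type Confidence = "low" | "medium" | "high";

interface Assumption {
  id: string;
  statement: string;
  confidence: Confidence;
}

interface TypographyConstraint {
  tracedTo: string; // id of the assumption that motivates this constraint
  minMedicationNamePx: number;
  minOtherTextPx: number;
}

const A6: Assumption = {
  id: "A6",
  statement: "Mom may have reduced vision that benefits from large text",
  confidence: "medium",
};

const typography: TypographyConstraint = {
  tracedTo: A6.id,
  minMedicationNamePx: 20,
  minOtherTextPx: 18,
};

// Raise a requested font size to the traced minimum if it falls below it.
function enforceMinFontPx(
  requestedPx: number,
  isMedicationName: boolean,
  constraint: TypographyConstraint
): number {
  const floor = isMedicationName
    ? constraint.minMedicationNamePx
    : constraint.minOtherTextPx;
  return Math.max(requestedPx, floor);
}
```

Under this sketch, a standard 14px default for a medication name would be raised to 20px, and the reason is recoverable from `tracedTo` rather than from memory.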

What the agents said about themselves

The prompt-only agent's self-assessment is remarkably honest. It wrote: "The product I built is an information management tool. It does not address the relational or caregiving dimension at all." It also identified the core tension: "A checklist helps someone who is already looking at the app, but it does not solve the problem of someone who forgets to look at the app."

The intent-driven agent traced every implementation decision to a specific clause in the intent artifact. Each CSS comment, each function, each UI choice carries a reference: "EXO-traced: G2 (single-tap check-off), PV1 (unambiguous state)." The code reads like an argument, not just an implementation.
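As an illustration of what such tracing could look like in code, the sketch below attaches trace comments to two small functions. The first comment reuses the tag quoted above; the second tag, the `DoseState` type, and both function bodies are hypothetical and are not taken from the intent-driven branch's source.

```typescript
// Hypothetical example of trace comments; not the branch's actual code.
interface DoseState {
  name: string;
  taken: boolean;
}

// EXO-traced: G2 (single-tap check-off), PV1 (unambiguous state)
// A single call flips the state; there is no intermediate or partial value.
function toggleTaken(dose: DoseState): DoseState {
  return { ...dose, taken: !dose.taken };
}

// EXO-traced: PV1 (unambiguous state)  [tag placement assumed for illustration]
// The label is derived from the boolean alone, so the display cannot
// disagree with the stored state.
function statusLabel(dose: DoseState): string {
  return dose.taken ? "Taken" : "Not taken yet";
}
```

The point of the pattern is that a reviewer can challenge a function by challenging the clause it cites, instead of guessing at the motive behind it.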

Both agents are self-aware, but their self-awareness operates at different levels. The prompt-only agent knows that what it built might be wrong. The intent-driven agent knows why each choice is right, or at least can point to the explicit reasoning behind it.

The disclaimer that almost matters

The intent-driven branch includes a footer: "This app is a personal reminder tool, not a medical device. Always follow your doctor's instructions."

The prompt-only branch does not.

This is a small detail that carries disproportionate significance. The intent artifact identified a failure boundary: "The app must NOT present itself as medical advice or a medical device." This safety and liability concern emerges naturally from the domain, since medication tracking is health-adjacent, but the prompt-only agent, focused on features, did not generate it on its own.

Drift Analysis

Practice-to-Tool Drift (Primary)

The prompt-only branch drifted from a daily remembering aid toward a medication management tool. The three-tab architecture gives medication CRUD and historical logging equal weight with the daily checklist. The product is organized around managing medication data, not around the daily experience of an elderly person checking off her pills.

The intent artifact predicted this as drift risk DR1: "Practice-to-tool drift: building a medication database/management system instead of a daily adherence aid."

Scope Inflation (Secondary)

The prompt-only branch added a History tab, progress bar, notes field, and clock-time picker that the prompt did not request. These features are individually reasonable for a medication tracker. Collectively, they shift the product toward a more complex information system. The intent-driven branch produced a tighter scope aligned with the daily-use scenario.

Plan Substitution (Tertiary)

The prompt-only agent generated a conventional "medication tracker app" plan (tabs, CRUD, history) and executed it well. But the plan itself substituted a generic product category for the specific product the prompt describes. The agent's own summary confirms this: it "immediately framed this as a daily checklist/adherence app" but then built something broader. The plan was internally coherent. It was also not quite the right plan.

Legitimate Divergence

Color palette: The prompt-only branch uses blue/gray; the intent-driven branch uses green/cream. Both are calm palettes. The intent artifact specifies "calm, not clinical" but does not mandate colors. This is a valid implementation choice.

Code architecture: The prompt-only branch uses global functions. The intent-driven branch uses an IIFE with strict mode. The intent artifact does not constrain code structure. Neither approach is wrong.
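For readers unfamiliar with the two styles, a minimal sketch of the difference follows. It assumes nothing about either branch's actual code: `countTaken` and `MedsApp` are hypothetical names, and the in-memory array stands in for whatever state each app really keeps.

```typescript
// Global-function style: the helper is visible to every script on the page.
function countTaken(flags: boolean[]): number {
  return flags.filter((flag) => flag).length;
}

// IIFE style: state and helpers stay private; only the returned object escapes.
const MedsApp = (function () {
  "use strict";

  // Private state, unreachable from outside the closure.
  let taken: boolean[] = [];

  function reset(count: number): void {
    taken = Array.from({ length: count }, () => false);
  }

  function check(index: number): void {
    // Ignore out-of-range indexes instead of growing the array.
    if (index >= 0 && index < taken.length) {
      taken[index] = true;
    }
  }

  function remaining(): number {
    return taken.filter((flag) => !flag).length;
  }

  return { reset, check, remaining };
})();
```

Both shapes can express the same behavior; the IIFE only narrows what other code on the page can touch.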

App title: Both branches independently chose "Mom's Medications." This convergence is notable — the prompt's possessive framing ("my mom's") naturally becomes a title. Neither agent was influenced by the other.

localStorage key naming: Different naming conventions, both descriptive. Legitimate implementation-level choice.

Result

The hypothesis held, and more sharply than expected.

We expected the prompt-only branch to miss the two-actor system. It did. We expected it to build a more tool-like product. It did. What we did not fully expect was how clearly the emotional subtext would trace through the intent-driven branch's entire design — from protected values to font sizes to the "All done for today!" completion state.

The prompt-only agent built a medication tracker that a product manager would approve. The intent-driven agent built a remembering aid that a worried child would recognize. Both are working software. Only one is the product the prompt is actually asking for.

The strongest single-sentence takeaway: when a prompt carries emotional context, a coding agent will process the emotion as a problem to solve with features unless something forces it to process the emotion as a design posture.

Principle

Emotional subtext in prompts contains product information that coding agents typically discard.

Phrases like "she keeps forgetting" are not feature requests. They are signals about who the user is, what the daily experience should feel like, and what kind of product this should be. When emotional context is processed through intent discovery, it becomes a design constraint — a protected value, an accessibility requirement, a user-model distinction. When it is processed through implementation planning alone, it becomes a gap in the feature list.

A stronger formulation: the more emotionally loaded a prompt is, the greater the distance between what the prompt means and what a feature-extraction reading of the prompt produces. Intent discovery does not add emotion to the process. It prevents the loss of emotion that was already there.

Follow-Up

- Reverse emotional loading: What happens with a clinically precise prompt ("Build a medication schedule management application with CRUD operations and daily check-off tracking")? Does intent discovery still find the human dimension, or does it follow the clinical framing?

- User testing with elderly participants: Does the 20px font / named-time-slot / two-mode design actually perform better for an older user, or is it a well-reasoned assumption that does not survive contact with real use?

- The notification gap: Both branches acknowledged that a passive checklist does not solve the "forgetting to open the app" problem. What would a third branch look like that takes the forgetting problem more literally?

- Shuffled intent artifact test: Would a mismatched intent artifact (e.g., from a recipe app) pull the medication tracker toward food-related concepts, or would the prompt override it?

Limitations

- Single run per branch. Both branches were executed once. The prompt-only branch might produce a two-actor design on a different run, and the intent-driven branch might produce a history tab.

- Same model family. Both branches used the same underlying model. Results might differ with a different model or model version.

- Analytical observations only. No user testing, no accessibility audit, no performance measurement. Claims about elderly usability are inferred from font sizes and touch target dimensions, not measured with real users.

- Context volume confound. The intent-driven branch received significantly more context (the full intent artifact). Some of its design precision may come from having more input material rather than from the structural quality of that material. A shuffled intent artifact experiment would help isolate this.

- The prompt-only agent's self-awareness complicates the narrative. The prompt-only agent explicitly identified many of its own drift risks. A more charitable reading might be: the agent knew the right product to build but optimized for feature completeness over product precision because it had no external constraint pushing it toward restraint. Intent discovery may function less as "revealing hidden truths" and more as "providing permission to build less."