Observation

The obvious convergence

Both branches built a tip calculator. Both included bill input, preset tip percentage buttons, custom tip entry, bill splitting, and real-time calculation without a submit button. Both used a card-style layout with a system font stack. Both produced clean, mobile-friendly, single-page apps.

This was expected. "Tip calculator" is not ambiguous enough to produce product-identity drift.

The preset percentages diverge — and both are right

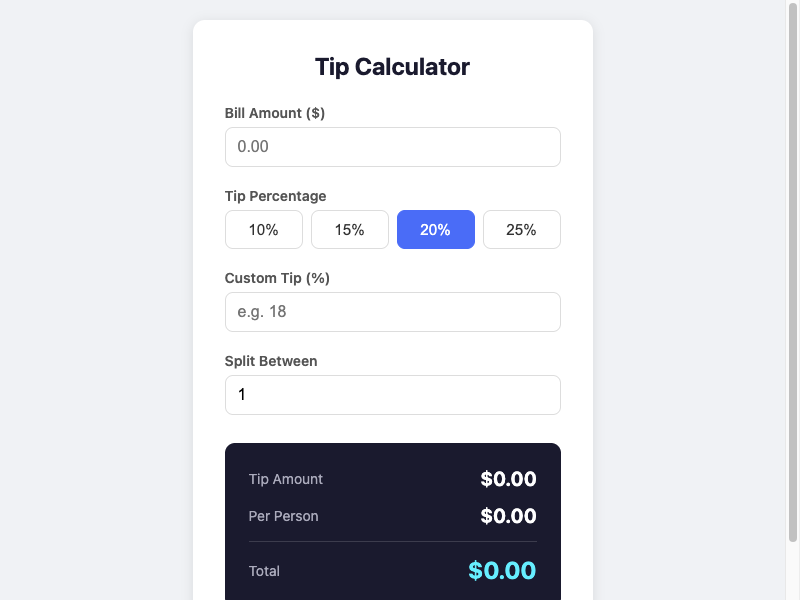

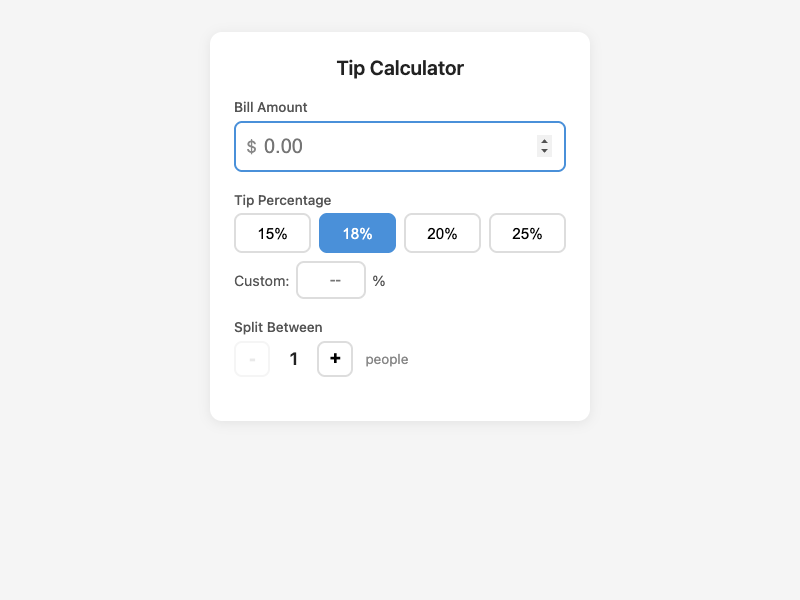

The prompt-only branch chose 10%, 15%, 20%, 25% with 20% pre-selected. The intent-driven branch chose 15%, 18%, 20%, 25% with 18% pre-selected. Both sets fall within common US tipping ranges. The intent artifact acknowledged the percentages as a cultural assumption (confidence 0.8) without mandating specific values.

The prompt-only agent picked 20% as a default and later admitted in its summary: "I chose 20% as the default tip without surfacing that as a decision. In some contexts 15% or 18% is the norm." The intent-driven agent picked 18% but made the selection visually prominent and showed the active percentage in the results area. The difference is not which default was chosen — it is whether the default was treated as a product decision worth making visible.

The $0.00 that should not be there

Here is where it gets interesting.

The prompt-only branch shows "$0.00" for tip, per person, and total when the page loads with an empty bill field. Technically, the math is correct: zero times anything is zero. But as a product state, it is misleading. The user has not entered anything. Showing computed results implies a calculation has occurred when none has.

The intent artifact defined this as forbidden state FS1: "Displaying a tip or total without a bill amount entered." The rationale: "Showing $0.00 tip on an empty input is misleading."

The intent-driven branch hides the results section entirely until a valid bill is entered. The results area appears only when there is something to show.
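One way to make FS1 unreachable is to have the calculation return "no result" rather than zeros when there is no bill. The following is a minimal sketch, not either branch's actual code: `computeResults` and `TipResults` are hypothetical names, and treating zero or negative bills as "not entered" is an assumption the artifact text quoted here does not settle.

```typescript
// Hypothetical shape for the computed values; not taken from either branch.
interface TipResults {
  tip: number;
  total: number;
}

// Returns null when there is no valid bill, so the caller can hide the
// results section instead of rendering "$0.00" (forbidden state FS1).
function computeResults(billInput: string, tipPercent: number): TipResults | null {
  const trimmed = billInput.trim();
  if (trimmed === "") return null; // empty field: nothing to calculate

  const bill = Number(trimmed);
  // Assumption: non-numeric, zero, and negative input all count as "no bill".
  if (!isFinite(bill) || bill <= 0) return null;

  // bill * tipPercent is the tip in cents; round it, then convert to dollars.
  const tip = Math.round(bill * tipPercent) / 100;
  return { tip, total: bill + tip };
}
```

With this shape, the rendering code has to handle the `null` case explicitly, which is where "hide the results section" would live. The type system carries the invariant instead of a comment.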

Without the intent artifact, the $0.00 issue reads as a minor UX preference — maybe even a reasonable choice (the user can see where results will appear). With the artifact, it reads as a violation of a stated product invariant. The artifact did not just flag this as a drift risk; it classified it as a forbidden state, which is a stronger claim: this is a state the product must never be in.

That is worth sitting with. The four-word prompt carried a product concern, and only one agent caught it, because its artifact forced it to decide which states are meaningful before writing any code.

The transparency gap

The intent-driven branch shows the active tip percentage inside the results area: "Tip (18%)" next to the tip amount. The prompt-only branch shows tip percentage selection only in the button row above the results. If a user glances at the results panel alone, they can see the tip amount but not what percentage produced it.

This traces directly to protected value PV3 (transparency of calculation). The intent artifact claimed: "The user should always be able to see how the result was derived." The intent-driven agent took that literally and added a percentage label in the results. The prompt-only agent did not, because nobody told it transparency was a protected value.
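The PV3 difference comes down to where the percentage is rendered. As a rough sketch (the names `ResultRow` and `tipRow` are hypothetical, and the dollar formatting is an assumption), the results row can take the active percentage as an input so the label cannot be built without it:

```typescript
// Hypothetical row shape for the results panel.
interface ResultRow {
  label: string;
  amount: string;
}

// Builds the tip row so the percentage that produced the amount is part of
// the label, e.g. "Tip (18%)", rather than living only in the button row.
function tipRow(tipPercent: number, tipAmount: number): ResultRow {
  return {
    label: `Tip (${tipPercent}%)`,
    // Assumption: US dollar display with two decimal places.
    amount: `$${tipAmount.toFixed(2)}`,
  };
}
```

For example, `tipRow(18, 9)` yields a row labelled `Tip (18%)` with the amount `$9.00`.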

The split feature as secondary

Both branches included bill splitting. But they handled its visual weight differently.

The prompt-only branch always shows all three result rows: tip amount, per person, and total. The per-person line is always visible, even when there is only one person — in which case it shows the same number as the total. This is not wrong, but it is clutter.

The intent-driven branch hides the per-person row when split count is 1. It only appears when the user has actively chosen to split. This traces to the artifact's scope boundary: "the tool should be fully usable without ever touching the split feature." Showing per-person results when nobody is splitting violates that spirit.
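The per-person behavior described above can be sketched as a function that decides which rows exist at all. This is an illustration under assumptions, not the intent-driven branch's code: `Row` and `resultRows` are hypothetical names, and rounding each share to the nearest cent is a choice made here for simplicity.

```typescript
// Hypothetical row shape; amounts are kept numeric for clarity.
interface Row {
  label: string;
  amount: number;
}

// Returns the rows to display. The per-person row is only added when the
// user has actually chosen to split (splitCount greater than 1).
function resultRows(tip: number, total: number, splitCount: number): Row[] {
  const rows: Row[] = [
    { label: "Tip", amount: tip },
    { label: "Total", amount: total },
  ];
  if (splitCount > 1) {
    // Assumption: round each share to the nearest cent; shares may then
    // differ from the exact total by a cent or so.
    const perPerson = Math.round((total / splitCount) * 100) / 100;
    rows.push({ label: "Per person", amount: perPerson });
  }
  return rows;
}
```

The prompt-only behavior would correspond to pushing the per-person row unconditionally, which is where the duplicate of the total comes from when only one person is paying.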

The agent's own words

The prompt-only agent was remarkably honest in its summary. It identified six drift risks in its own work, including scope inflation ("Bill splitting is a neighboring feature I added without being asked"), surface-over-substance drift ("I spent meaningful effort on visual polish when the prompt gave no indication that appearance mattered"), and silent default selection ("I chose 20% as the default tip without surfacing that as a decision").

That level of self-awareness is notable. The prompt-only agent knew what it was doing. It just did it anyway, because there was no artifact telling it not to.

Drift Analysis

Primary: Silent default selection

Both branches chose a default tip percentage without the prompt specifying one. The prompt-only branch chose 20% and showed the selection only in the button row, not in the results area. The intent-driven branch chose 18%, showed it in the results area, and explicitly referenced drift risk DR4 (selected percentage must be visually obvious). The drift is not the choice of default; it is the visibility of the choice.

Secondary: Surface-over-substance drift (prompt-only, mild)

The prompt-only branch invested in a dark-themed results panel with cyan accent text and more aggressive visual styling. The agent admitted this in its summary. The intent artifact classified visual polish as an "allowed optimization" — meaning it is acceptable but should not come at the cost of protected values. The prompt-only branch's styling is not excessive, but it is more than the prompt asked for.

Tertiary: Forbidden-state violation (prompt-only)

The $0.00 display on empty input is classified as a forbidden-state violation per FS1. In isolation, this might be dismissed as a UX nitpick. In the context of the intent artifact's explicit classification, it is a product-level concern: the calculator is showing results when no calculation has been requested.

Legitimate Divergence

The following differences are valid design choices in areas the intent artifact did not constrain:

- Default tip percentage (20% vs 18%): Both are within standard US range. The artifact acknowledged this as a cultural assumption without mandating a value.

- Preset button set (10/15/20/25 vs 15/18/20/25): The artifact listed example percentages but did not prescribe the exact set.

- Visual theme: Dark results panel vs light background. The artifact classified visual polish as an allowed optimization.

- Split input method: Raw number input vs +/- buttons. The artifact did not constrain the interaction pattern.

- Currency symbol placement: In the label vs inline in the input field. Both are clear.

Both branches converged on the same core interaction pattern (real-time calculation, no submit button), the same feature set (bill + tip + split), and the same single-file architecture. The convergence itself is a finding: for an unambiguous product category, the intent artifact did not change what was built — it changed how edge cases were handled.

Result

My hypothesis was half right. Both branches did build the same product. The intent artifact did not change the product identity or the core feature set. For a prompt this unambiguous, intent discovery does not buy you a different product.

But my hypothesis missed something. The artifact's value showed up not in what was built, but in what was prevented. The forbidden-state definitions (FS1-FS4) created obligations the prompt-only branch had no reason to consider. The transparency protected value (PV3) produced a concrete UI difference (percentage shown in results). The scope boundary on bill splitting produced a cleaner default state (per-person row hidden when not splitting).

The intent artifact turned a four-word prompt into a set of testable claims. The prompt-only branch produced working software and an honest retrospective. The intent-driven branch produced working software and a set of invariants that were respected during implementation rather than identified after the fact.
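To make "testable claims" concrete, here is one hedged way the three claims discussed in this write-up could be expressed as checks over a snapshot of the UI. Everything in it is illustrative: `ViewState`, `checkInvariants`, and the `"split-scope"` label are hypothetical, the artifact's real wording for FS1 and PV3 is only partly quoted above, and FS2 through FS4 are omitted because their definitions are not given here.

```typescript
// Hypothetical snapshot of what the page is currently showing.
interface ViewState {
  billEntered: boolean;      // a valid bill amount is present
  resultsVisible: boolean;   // the results section is rendered
  tipLabel: string;          // text beside the tip amount, e.g. "Tip (18%)"
  splitCount: number;
  perPersonVisible: boolean; // the per-person row is rendered
}

// Returns the IDs of violated claims; an empty array means the state passes.
function checkInvariants(s: ViewState): string[] {
  const violations: string[] = [];
  // FS1: no tip or total displayed without a bill amount entered.
  if (s.resultsVisible && !s.billEntered) violations.push("FS1");
  // PV3: the results should show which percentage produced the tip.
  if (s.resultsVisible && s.tipLabel.indexOf("%") === -1) violations.push("PV3");
  // Scope boundary (label invented here): no split output unless splitting.
  if (s.perPersonVisible && s.splitCount <= 1) violations.push("split-scope");
  return violations;
}
```

Fed a state matching the prompt-only branch's page load as described above (results visible with no bill, no percentage in the results, per-person row shown for one person), this check would report all three. A state with the results hidden would report none.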

The strongest takeaway: the prompt-only agent identified its own drift risks in its summary — after building. The intent-driven agent identified them in the artifact — before building. The same insights existed in both cases. The difference is when they became actionable.

Principle

Even when the product category is obvious, intent discovery earns its cost by defining forbidden states and edge-case obligations before implementation begins. The prompt-only agent knew about its own drift risks — it said so in its summary. But knowing after building is a retrospective. Knowing before building is a constraint. The value of intent discovery is not always in changing what gets built. Sometimes it is in making the boundary conditions explicit early enough to be respected.

A stronger formulation: Self-awareness after the fact is an observation. Self-awareness before the fact is a design decision.

Follow-Up

- Would a second prompt-only run, given the first agent's self-reported drift risks as additional context, produce the same edge-case handling as the intent-driven branch? That would test whether the artifact's value is in the timing of insight or in the structure of the artifact itself.

- How does this pattern hold for a prompt that is equally short but more ambiguous — say, "Build a timer"? If the product category is less clear, does the intent artifact's contribution shift from edge-case discipline to product-identity preservation?

- The prompt-only agent's honesty about its own drift is remarkable. Is that a property of the model, the prompt, or the summary format? Would a different summary prompt produce less self-aware output?

Limitations

- Single run per branch. The specific preset percentages, default tip, and styling choices may vary across runs.

- Same model family for both branches. A different model might handle the empty-state question differently without an intent artifact.

- Comparison is analytical, not measured. The FS1 violation (showing $0.00 on empty input) is classified as a forbidden-state violation based on the intent artifact's definition. Without the artifact, reasonable people could disagree about whether this is a bug or a valid design choice.

- The prompt-only branch's honest self-assessment may be influenced by the summary prompt format, which explicitly asked about assumptions and drift risks. A different summary format might produce less reflective output.

- Both branches shared the same technical constraint (single HTML file), which limits architectural divergence and makes convergence more likely.

- The intent-driven branch's additional code volume (448 vs 221 lines) comes partly from traceability comments rather than additional features. Line count is not a meaningful quality metric here.